Tell me something I don’t know. Every year, this is the challenge the LDV Vision Summit sets before an audience of experts in their fields: scientists, researchers, founders, CEOs, investors and pundits. And every year, the reaction is the same: Wow, I didn’t know that. The 2016 edition, which closed just a few days ago, did not disappoint. In a field where breakthroughs seem few and far between, there is still room for anyone to discover research, studies, applications or companies that reveal yet another side of the huge impact visual tech is having on our world.

Tell me something I don’t know was also the most potent theme of the first day’s technology deep dive, brought forth by Larry Zitnick, Artificial Intelligence Research Lead at Facebook. Fresh off a keynote introduction by LDV Vision Summit organizer Evan Nisselson about the ubiquitous eye that will be everywhere (including paint used as a visual sensor), seamlessly helping us analyze and manage our world, Larry pushed computer vision research a step further. The issue with visual recognition today, he said, is that while it is extremely good at recognizing content in images, it completely fails at understanding context. He used mirrors as an example. While Facebook’s systems and others can easily recognize a mirror in a photograph, they all fail to recognize a selfie taken in a mirror, because the mirror itself does not actually appear in the image as an object. The next step for visual recognition research, he continued, is not throwing more computing power at existing algorithms but rather figuring out how to build meaning into recognition. It is not enough to know what something is; machines now need to learn the relationships between objects, as humans do.

This theme was picked up in the next session by Serge Belongie, Professor of Computer Vision at Cornell Tech and co-organizer of the Summit. Serge pointed out that humans don’t so much need a machine to tell them what is in an image (we are quite capable of doing that on our own) as one that tells them something they don’t know. For this to happen, machine learning needs to incorporate meaning and intent when analyzing visual content. At the current pace of research, this could be something we see at next year’s edition of the Summit. In turn, this is where real intelligence begins to enter the field of artificial intelligence.

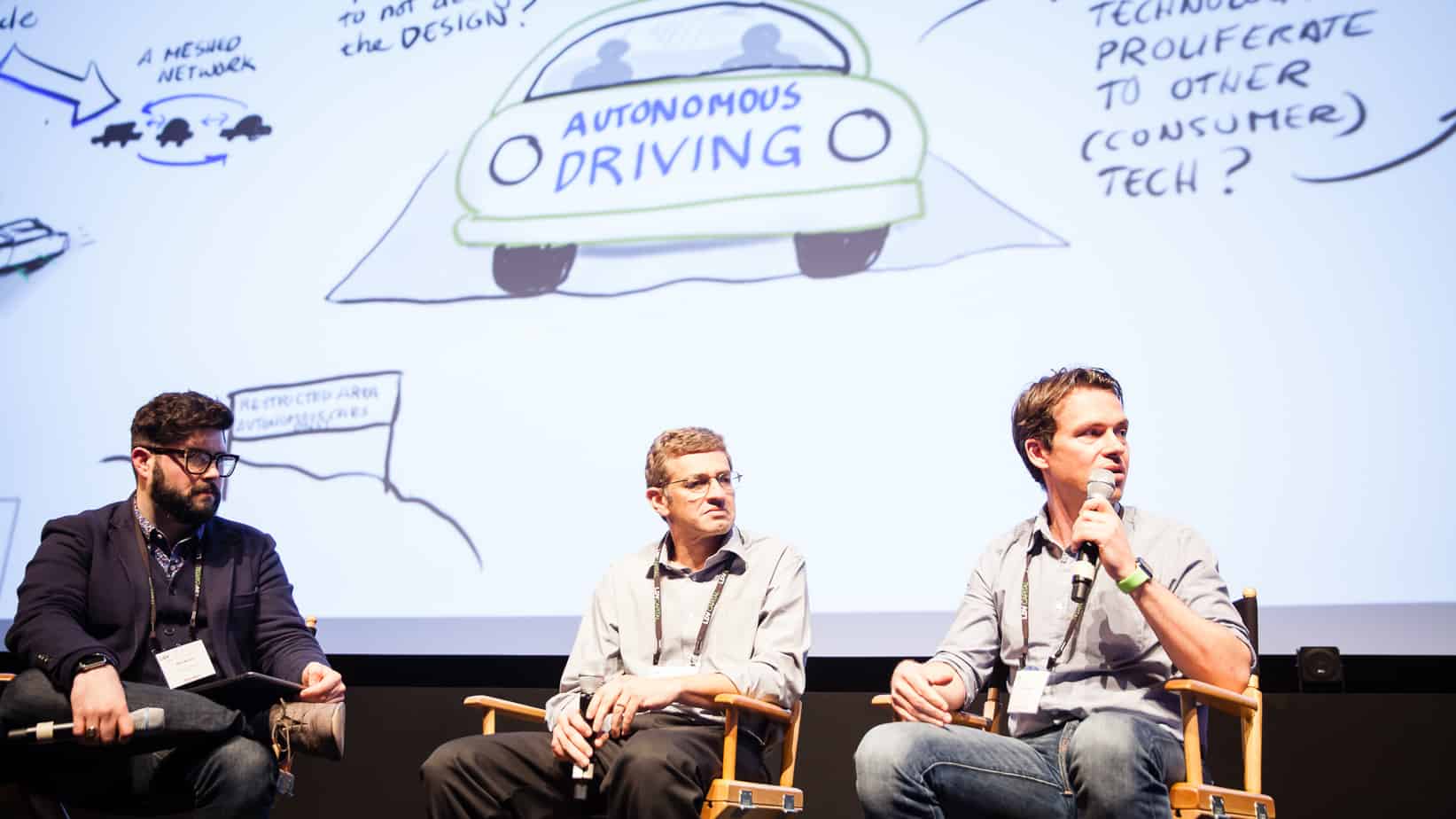

This theme also underpinned the panel on Autonomous Driving later in the day, as Sanjiv Nanda, VP of Engineering at Qualcomm Research, and Laszlo Kishonti, CEO of AdasWorks, discussed the evolution of self-driving machines. Through multiple examples of catastrophic exceptions that could lead to cars hitting people, it seemed increasingly important that the current state of computer vision quickly evolve to encompass meaning. While a human driver understands human behavior well enough to predict the erratic movements of a child on a sidewalk, a machine could be trained to do an even better job, since it has no bias, never gets tired or angry, is never distracted, and can use additional sensors, such as heat detection, to ‘see’ whether the child is in a heightened emotional state (a tantrum).

One aspect, however, that wasn’t mentioned on either the first or second day was ethics. While the IoEyes might be just around the corner, with sensors everywhere analyzing everything from our emotions to our pulse, we still need to figure out how all this information is used and by whom. At the current pace of technology, those decisions are being made by companies whose only moral guidelines are growth and profit. A good(?) example came on day two at the startup competition, where one company claimed to be able to identify potential terrorists using facial recognition, an idea straight out of a eugenics guidebook.

Day two of the summit allowed everyone to take a well-deserved break from neural network schemas and graphs, replacing them with a broader understanding of how visual content, whether stills or video, impacts our society. Enriched by new fields of expertise that analyze how humans react to content (mostly driven by advertising’s need for more precise data), academics and artists now have a much broader range of tools. From companies like Olapic, which uses its proprietary Picturank engine to understand engagement, to startups like Picasso Labs, which extracts patterns from popular images, machines are helping humans understand taste, interaction and social trends through visual content. This is key to better defining storytelling and understanding how iconic images affect our world, as NYU’s Lauren Walsh explained in presenting her research.

Day two revealed how central human insight is to the evolution of visual technology. Just as the first day demonstrated how increasingly powerful the results of machine-crunched data have become, the second showed how much human judgment is needed to manage all this data and make meaningful sense of it. And this is probably the greatest strength of the LDV Vision Summit. By gathering such a seemingly eclectic crowd (scientists, researchers, founders, investors, students, artists) around one unifying theme for two days, it builds a community of like-minded people who previously had no idea the others existed. Beyond the deals that are made, the summit continually builds bridges between individuals and companies who love to exchange ideas. Beyond the fascinating sessions, there is the serendipity and inspirational networking that leave everyone wanting more. Until next year.

You can read individual interviews with some of the panelists here: http://kaptur.co/category/ldv-summit/

——————————————–

You can sign up for the LDV Vision Summit Newsletter and get at least 70% off early bird tickets for next year’s Summit: http://www.ldv.co/visionsummit

——————————————–

Author: Paul Melcher

Paul Melcher is a highly influential and visionary leader in visual tech, with 20+ years of experience in licensing, tech innovation, and entrepreneurship. He is the Managing Director of MelcherSystem and has held executive roles at Corbis, Gamma Press, Stipple, and more. Melcher received a Digital Media Licensing Association Award and has been named among the “100 most influential individuals in American photography.”