Users have been warned multiple times by terms of use and public reports. Facebook, Instagram, WhatsApp, Snap, TikTok, YouTube, Twitter, and Pinterest have made it very clear that in exchange for allowing you to use their platform to share your visual content, you grant them rights to do pretty much anything they want with it. And they do. From training AI classifiers to extracting social and behavioral models to fine-tuning targeting algorithms, nothing is out of reach. For the most part, it has been accepted. Consumers have settled into the idea that they pay for the service by giving away a little bit of their privacy.

The recent revelation that Apple will proactively scan visual content stored on users’ iPhones and uploaded to its iCloud service to detect child pornography has profoundly rattled this status quo. The proposed system, presented to researchers and called “neuralMatch,” would use hashing to compare local content against an existing database of known child pornography images. In case of repeated positive matches, the system would notify a team of human reviewers, who could decrypt the images to confirm their content.

Apple’s announcement reads: “Apple’s method of detecting known CSAM (child sexual abuse material) is designed with user privacy in mind. Instead of scanning images in the cloud, the system performs on-device matching using a database of known CSAM image hashes provided by NCMEC (National Center for Missing and Exploited Children) and other child safety organizations. Apple further transforms this database into an unreadable set of hashes that is securely stored on users’ devices.” More detailed information here.
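To make the mechanism concrete, here is a minimal sketch of hash matching with a reporting threshold. It is not Apple's algorithm: the toy `average_hash` function stands in for whatever perceptual hash the real system uses, and every name in it (`average_hash`, `count_matches`, `should_escalate`, `threshold`) is hypothetical. It only illustrates the idea that images are reduced to fingerprints, compared against a list of known fingerprints, and escalated to human review after repeated matches.

```python
from typing import Iterable, List, Set

Image = List[List[int]]  # grayscale pixel grid, values 0-255 (assumed format)


def average_hash(pixels: Image) -> int:
    """Toy perceptual hash: one bit per pixel, set when the pixel is
    brighter than the image's mean. A stand-in, not Apple's hash."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def count_matches(photos: Iterable[Image], known_hashes: Set[int]) -> int:
    """Count photos whose fingerprint appears in the known-hash database."""
    return sum(1 for photo in photos if average_hash(photo) in known_hashes)


def should_escalate(photos: Iterable[Image], known_hashes: Set[int],
                    threshold: int) -> bool:
    """Escalate to human review only after repeated positive matches."""
    return count_matches(photos, known_hashes) >= threshold


# A known image, a slightly altered copy of it, and an unrelated image.
known_image = [[0, 255], [255, 0]]
altered_copy = [[10, 250], [240, 5]]
unrelated = [[255, 0], [0, 255]]

database = {average_hash(known_image)}
library = [known_image, altered_copy, unrelated]
```

The sketch also shows why the content of the database matters more than the matching code: nothing in it knows what the fingerprints represent, so whoever controls `database` decides what gets flagged.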

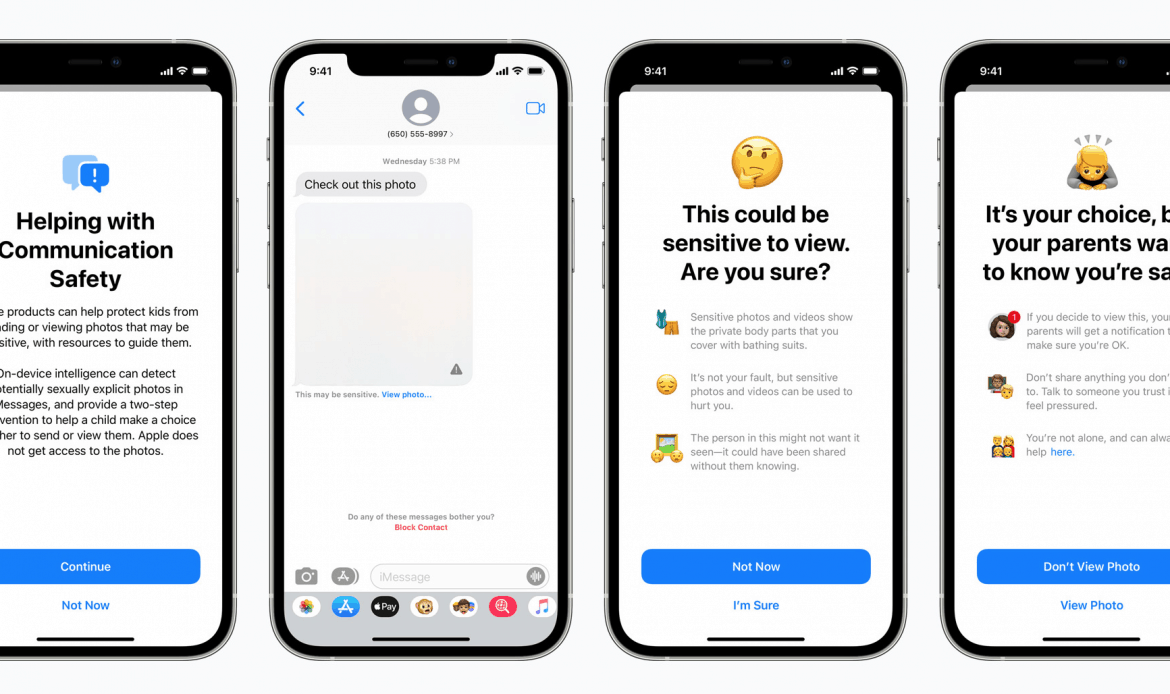

Along with the scanning of images, Apple will deploy a detector of sexually explicit images for its messaging app: “When receiving this type of content, the photo will be blurred, and the child will be warned, presented with helpful resources, and reassured it is okay if they do not want to view this photo. As an additional precaution, the child can also be told that, to make sure they are safe, their parents will get a message if they do view it. Similar protections are available if a child attempts to send sexually explicit photos. The child will be warned before the photo is sent, and the parents can receive a message if the child chooses to send it.”

Similar to the scanning process, this detection tool will reside locally.

The intent is commendable. The process is not.

In this case, there is no willing consent in exchange for services. An iPhone is a device that is wholly owned via a commercial purchase and is expected, as such, to be under the exclusive control of its owner. There is an expectation of privacy for every item entered and stored, be it contact lists, text messages, notes, photos, or videos. neuralMatch is a clear violation of this legitimate expectation.

Obviously, the main concern is not about the detection of child pornography but about the tool’s capability. Governments could easily use it to identify and locate anyone they wish to censor. A simple process of adding image fingerprints to the filters would transform it into a formidable repressive weapon.

However, the issue is wider. Apple’s proposal is a clear confirmation of how the tech industry perceives its users’ content. Empowered by the total absence of revolt in the face of a complete and constant abuse of rights, major tech companies freely continue to bulldoze what little remains of individuals’ privacy. The message is clear. Even after purchase, the product or service remains the property of the manufacturer and under its control. It is the landlord economy.

Software upgrades become a justification for a redefinition of rights and, for many OEMs, a power grab. How long before Tesla decides to monitor everything happening in a car, reporting on “suspicious” conversations? How long before Google starts reporting on “suspicious” search patterns? Will Canon or Nikon follow Apple’s lead and have on-device content detection and reporting tools monitoring every image taken?

There is a cost linked to every decision we make. If we are wise, it’s a benefit. While there is full legitimacy in deploying appropriate tools to fight child pornography, those tools should not trespass on the privacy of personal images. Nor should the tools we use in our daily lives be turned against us for purposes decided by others.

The feature is rolling out later this year on iOS 15, watchOS 8, iPadOS 15, and macOS Monterey.

Main image: Photo by Brandon Romanchuk on Unsplash

Update from Apple:

Update as of September 3, 2021: Previously we announced plans for features intended to help protect children from predators who use communication tools to recruit and exploit them and to help limit the spread of Child Sexual Abuse Material. Based on feedback from customers, advocacy groups, researchers, and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features.

https://www.apple.com/child-safety/

Author: Paul Melcher

Paul Melcher is a highly influential and visionary leader in visual tech, with 20+ years of experience in licensing, tech innovation, and entrepreneurship. He is the Managing Director of MelcherSystem and has held executive roles at Corbis, Gamma Press, Stipple, and more. Melcher received a Digital Media Licensing Association Award and has been named among the “100 most influential individuals in American photography.”