While the damage is very real and can go far beyond a bruised reputation, companies and individuals are left unprotected against deepfakes and synthetic media. Only a handful of companies offer solutions to this growing threat. Sensity (formerly Deeptrace Labs), a startup based in the Netherlands, aims to change that. We spoke with co-founder and CEO Giorgio Patrini about how it works:

A little about you, what is your background?

My background is in academia. I hold a Ph.D. in machine learning from the Australian National University. Following my Ph.D., I conducted post-doctoral research on deep learning, privacy, and encryption for learning systems and, in my last research appointment at the University of Amsterdam, on generative models. Generative models are used in Artificial Intelligence for automating synthesis of artificial data, for example, photo-realistic images, text to speech, voice cloning, and face-swapping in videos.

The societal implications of new technology have always been a personal interest. In 2018 we were at the tipping point: technologies and tools for faking a person’s appearance in audiovisual media, by their face or voice, started to become easy to use for non-experts and widely used for malicious applications online, which the mainstream press popularised as “deepfakes”. My co-founder and I realized the need for new defensive solutions to respond to a problem that was only going to grow unless stopped, hence Sensity was born. Previously, I had also co-founded a startup called Waynaut back in my home country of Italy, which was acquired in 2015.

What is Sensity? What does it solve?

Sensity’s mission is to provide customers with solutions for detecting, monitoring, and countering threats posed by deepfakes and other forms of malicious visual media. As our research (see State of Deepfakes 2019) has shown, the rapid evolution of deepfakes poses a range of serious threats that would previously have required highly sophisticated technology and resources to execute. These threats range from fake defamatory content targeting individuals to corporate reputation attacks and enhanced social engineering. As the world’s first visual threat intelligence company, Sensity provides customers with the tools to detect and intercept these threats.

What can you do when a deepfake is detected? There are no real laws for handling deepfakes or manipulated media. Will it be up to your customers to decide on a follow-up action? What will it look like?

Depending on the nature of the deepfake, there are several approaches for getting content removed. Appealing to harassment or illicit use of one’s personal image is often enough to get content taken down. Sometimes the infringement clearly relates to a content producer’s copyright. When we face a tough case with uncooperative third parties, we can still have those underground websites blacklisted by search engines. We offer these services via legal tech partners in our network. A crucial resource that we are uniquely positioned to provide is real-time intelligence: as soon as fake content compromising the reputation of an individual is uploaded online, we inform the relevant stakeholders. It is then at the customer’s discretion whether they use our takedown services or work with their legal team.

Let’s talk about the marketplace. Who will be your typical customer? And why?

We receive significant interest daily from a wide range of sectors and individuals who are primarily concerned about how deepfakes could impact their operations and reputation.

Some of our most concerned customers include key players from the entertainment sector whose clients are already being targeted by deepfakes, brands that are concerned about deepfakes being used to attack their reputation or security operations, and organizations that are looking to prepare for a new form of disinformation attack. All of our potential customers have one thing in common: visual media plays a significant role in shaping their public perception and day-to-day operations.

Everyone talks about the huge damage that deepfakes and manipulated images can cause. However, there are few or no companies, besides yours, offering a solution. Why is that?

One of the reasons Sensity is the first company to bring a solution to market is our awareness of the threats back in early 2019. We started building out our monitoring capabilities and conducted extensive threat intelligence research to better understand who the bad actors developing creation tools are, how they are selling services or monetizing fake videos, and how deepfakes spread online. Threat modeling is fundamental when you are building a security product because you need to understand who your adversaries are and what motivates them. Most of the public discussion around deepfakes has not explored this angle of the question. Competition has started, though, and as deepfakes continue to develop, I am confident other companies will follow to address growing market needs.

Deepfakes are usually associated with news. But what is the potential financial damage of deepfakes for brands?

Deepfake-enhanced disinformation is certainly a key concern that has generated a lot of media attention, but we currently see the threats to brands as more tangible and easier to orchestrate. We witness deepfake activity surrounding C-suite executives and many brand ambassadors, such as influencers and Internet personalities, on a daily basis. When you consider how a deepfake of a CEO or CFO can impact share prices or corporate reputation, the potential for damage is significant. Our threat intelligence platform is designed with these concerns in mind, to intercept threats and alert customers who could pay a high price if compromising videos circulate widely.

What is the business model? Are you planning to deliver an app for everyone to use, or to offer a SaaS product or an API for businesses to integrate?

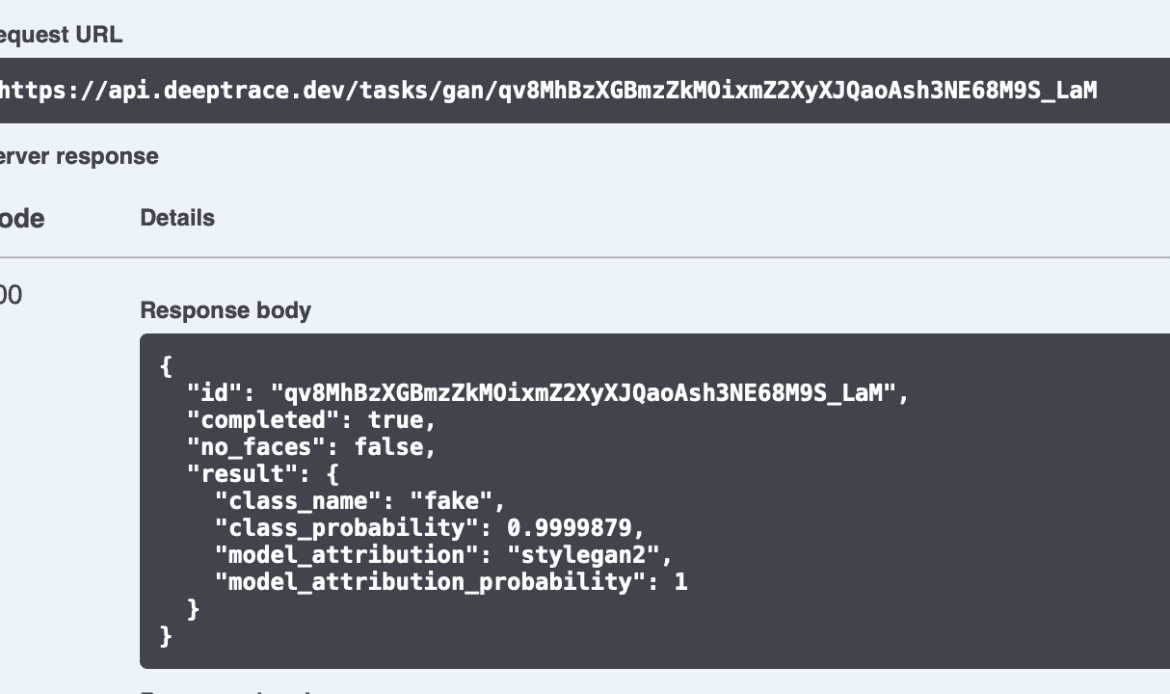

We create value for users in two different ways, depending on the customer’s needs. The first of these is detection capabilities via a REST API. You send a URL or a file containing videos or images to our cloud service, and we return a report with the results of automated tests that look for traces of AI synthesis tools, along with confidence scores.
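As a rough illustration of the request-and-report flow described above, here is a minimal Python sketch. The interview does not give an endpoint, authentication scheme, or report schema, so the URL, the `Authorization` header, and the `tests`, `name`, and `confidence` fields are all hypothetical placeholders, not Sensity’s actual API.

```python
import json
import urllib.request

# Hypothetical endpoint: the interview does not publish a URL or schema.
API_URL = "https://api.example.com/v1/analyze"


def build_request(media_url: str, api_key: str) -> urllib.request.Request:
    """Build a POST request asking the service to analyze one media URL."""
    body = json.dumps({"url": media_url}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


def analyze(media_url: str, api_key: str) -> dict:
    """Send the request and decode the JSON report (needs network access)."""
    request = build_request(media_url, api_key)
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def flagged_tests(report: dict, threshold: float = 0.8) -> list:
    """Return the names of tests whose confidence is at or above threshold."""
    return [
        test["name"]
        for test in report.get("tests", [])
        if test["confidence"] >= threshold
    ]


# Offline example using a made-up report in the assumed shape.
sample_report = {
    "tests": [
        {"name": "face_swap", "confidence": 0.93},
        {"name": "gan_generated", "confidence": 0.12},
    ]
}
print(flagged_tests(sample_report))  # prints ['face_swap']
```

Keeping request construction separate from report handling means the thresholding logic can be tried on a saved report without calling any service. The 0.8 threshold is an arbitrary example value.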

This API approach is powerful as it can be easily implemented within customers’ existing flows of visual content that need analysis. The second point of value addresses the distinct problem of a customer not knowing where compromising videos of themselves or related to their organization could surface on the Internet.

Our threat intelligence platform covers this need by continuously monitoring hundreds of sources, including underground hubs and the dark web, to find compromised and potentially fake content aligned with the customer’s criteria. The customer is then alerted automatically when this content is identified. Threat intelligence is offered as a subscription to our platform, with additional services for mitigation via content takedown.

How do you measure success with Sensity? The number of deepfakes detected? How do you know you found every instance?

Success for Sensity is about ensuring that people and organizations have the tools to reliably detect deepfakes and other visual threats. From the user’s perspective, it means that they will not be caught off guard by visual threats targeting them personally, because we will have notified them instantly and mitigated those threats through takedowns.

One objective of threat research is the discovery of new sources, communities, and actors that are likely to spread deepfakes online. It’s hard to estimate what the actual recall of our monitoring system is because there is no benchmark today. We were the first to study those trends from late 2018 and there is still no research providing a direct comparison.
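For reference, recall is the standard ratio below. The symbols are the usual textbook notation rather than anything from the interview: $TP$ is the number of deepfakes online that the monitoring found, and $FN$ is the number it missed.

```latex
\[
\text{recall} = \frac{TP}{TP + FN}
\]
```

Without an independent benchmark of everything that is online, $FN$ cannot be counted, which is why the ratio is hard to estimate.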

Deepfakes are notoriously hard to detect and evolving. What makes your technology foolproof?

I think this is perhaps the wrong way to frame thinking about detection technologies. Just as no spam filter or anti-malware software is 100% foolproof, nobody can claim that a deepfake detection solution will be able to work in every possible scenario. This is particularly the case when trying to detect “zero-day vulnerabilities”, i.e. new creation tools unseen online before. The reason is the adversarial dynamics of these security problems, where bad actors will keep improving their tools in an attempt to bypass any detection system that has been put in place.

Still, our threat intelligence informs us that only a handful of tools, and variations on those tools, produce the vast majority of deepfakes we see online today. In practice, this means we can achieve high performance in detection. The rapid evolution of synthetic media means we remain vigilant to these changes, and our threat intelligence research keeps track of them both in terms of cutting edge new approaches and changes to the open-source landscape. This gives us confidence when detecting an attack as the landscape continues to evolve.

What would you like to see Sensity offer that technology cannot yet deliver?

One area our threat research has highlighted as significant for the near future is live facial reenactment being used for impersonation on video calls. In the last few months, we have identified several new tools for real-time face-swapping over Zoom, Skype, Microsoft Teams, and other video conferencing tools. With those, you can join a call appearing with someone else’s face. This is particularly pertinent during the current pandemic, when the vast majority of meetings are held over video conferencing platforms, including those that involve the disclosure of highly sensitive information and financial operations. We will be exploring detection approaches to this in the near future.

Author: Paul Melcher

Paul Melcher is a highly influential and visionary leader in visual tech, with 20+ years of experience in licensing, tech innovation, and entrepreneurship. He is the Managing Director of MelcherSystem and has held executive roles at Corbis, Gamma Press, Stipple, and more. Melcher received a Digital Media Licensing Association Award and has been named among the “100 most influential individuals in American photography”.
