Trust in visual content has never been about pixels. It has always been about people. A new generation of tools is finally making that connection verifiable, but only if we understand what problem we are actually solving.

There is a number that should have changed everything. In 2018, Imatag analyzed 50,000 images across 750 websites and found that 80% of them had their metadata stripped. Gone. Creator name, copyright notice, the publication it came from, the photographer who made it, all of it, erased in transit. Not by malicious actors. Not by sophisticated attacks. Just by the ordinary mechanics of the internet: compression, platform upload pipelines, format conversion. The information that was supposed to tell you who made an image and where it came from simply didn’t survive the journey.

The industry knew. It had known for years. And it kept building on top of the same fragile metadata infrastructure anyway.

That is where this story begins, not with artificial intelligence, not with deepfakes, not with the latest regulatory anxiety in Brussels, New Delhi, or Sacramento. Those are symptoms. The actual disease is older, quieter, and more fundamental: we never had a reliable way to connect an image to the person or organization responsible for it.

Trust in visual content has never been about pixels. It has always been about people.

This matters more than most technical discussions acknowledge. Because the question audiences actually ask , consciously or not, when they encounter a photograph or a video is not “is this real?” It is: can I trust this? And trust is not a property of pixels. It is a property of relationships. You trust an image from Reuters because you trust Reuters. You trust a product photograph on a brand’s website because you trust the brand. You trust a photograph from a photographer you have followed for years because you trust them. The image is a conduit. The trust flows through it toward whoever made it.

That distinction has enormous consequences for how we think about every technology in this space. An AI-generated image from a brand you trust is trustworthy. A perfectly unmanipulated photograph from an unverifiable source is not. The question is never about the pixels. It is always about the provenance and specifically, about whether that provenance can be traced to a human being or organization you have reason to believe in.

The Metadata Failure Nobody Fixed

Digital image metadata, the IPTC standard fields that have embedded creator names, copyright notices, and captions in photographs since the 1990s, was never designed to be trusted. It was designed to be carried. The information was there if you looked for it and if nothing had stripped it. The standard was a vocabulary, not a verification system. Anyone could write anything in those fields. Nothing prevented it. Nothing flagged it when it happened.

This is worth sitting with for a moment. The entire infrastructure of image attribution in professional photography, the system that newsrooms, stock agencies, and publishers built their workflows around, was, at its foundation, an honor system. You claimed to be the creator. Publications assumed you were. No verification. No cryptographic binding. No way to know if the caption had been altered between the camera and the publication.

Imatag’s 2018 finding was not a surprise to anyone who had been paying attention. The IPTC had been sounding alarms about metadata stripping for years. The issue was structurally built into how the internet handles images: platforms optimize for speed and file size; metadata is weight they remove by default. The Copyright Office had documented it. Industry working groups had documented it. And the industry had, collectively, built nothing substantial to fix it.

Then generative AI arrived, and suddenly everyone discovered that provenance mattered.

The question audiences ask when they encounter a photograph is not ‘is this real?’ It is: can I trust this?

The impulse to frame AI as the origin of the provenance problem is understandable but wrong. AI did not create the problem. It revealed the inadequacy of the solution that had always been in place. When an AI-generated image can be dropped into any workflow with whatever metadata you choose to attach, the weakness that was always there becomes impossible to ignore.

The good news, and it is genuinely good news, is that the field is now building infrastructure that is fundamentally different. Cryptographic binding. Verifiable identity. Signed provenance chains that travel with content and cannot be altered without detection. The tools are real, and they are already in cameras, editing software, and publishing pipelines. They are imperfect, contested, and in some cases genuinely competing with each other. But the direction is right.

What the New Tools Are Actually Doing

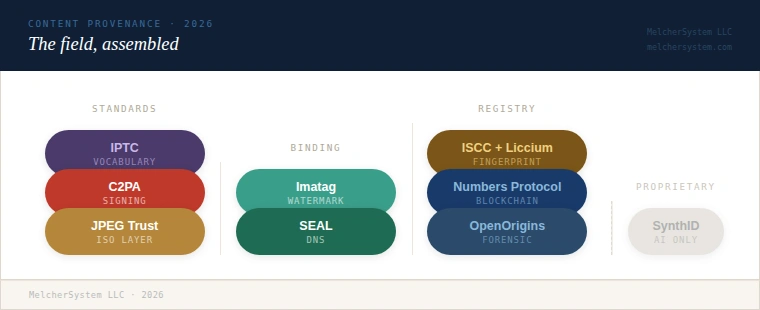

The landscape of provenance and authenticity tools can look bewildering from the outside: acronyms, standards bodies, competing protocols, blockchain projects, digital watermarking companies. But most of them are trying to solve one of three connected problems, and understanding which problem each one addresses makes the picture considerably clearer.

The first problem is binding:

How do you keep provenance information connected to the content it describes?

The internet is very good at separating them. As Imatag’s research showed, embedded metadata does not reliably survive the journey. Imatag’s own invisible watermarking technology addresses this directly, embedding a signal in the pixel data itself that survives compression, cropping, resizing, and even screenshots. The watermark becomes the persistent thread back to the provenance record, even after all conventional metadata has been stripped. This is what the C2PA standard calls soft binding: a fallback layer that keeps a content credential retrievable even when the manifest has been lost. Imatag is one of the key implementations of the C2PA soft binding algorithm and has worked directly with news agencies such as AFP on newsroom deployments.

The second problem is verification:

How do you know the provenance information is accurate, and that the person claiming to have created the content actually did?

This is where most metadata standards have always failed. The new generation of tools uses cryptographic signing — the same mathematical foundation that secures online banking — to bind provenance claims to a verified identity. When an image carries a valid C2PA Content Credential signed by Reuters, you cannot alter that credential without breaking the cryptographic seal. You know it came from Reuters because the signature proves it, not because Reuters wrote their name in a text field.

The third problem is attribution:

Who is actually being verified, and by what authority?

This is the deepest and most contested question in the space, and it is where the most interesting divergence between approaches exists. Different systems answer it differently, and the differences are not merely technical.

The Players

The dominant framework is C2PA, the Coalition for Content Provenance and Authenticity, a standard developed under the Linux Foundation, with founding members including Adobe, Microsoft, the BBC, Intel, and Truepic. C2PA defines how provenance information is structured, signed, and embedded in media files. Its consumer-facing implementation is called Content Credentials, and it is now integrated into Adobe’s Creative Cloud tools, OpenAI’s and Google’s image generation, major camera manufacturers including Nikon, Leica, Sony, and Canon, and is being rolled out across newsrooms globally. The Steering Committee has since expanded to include Amazon, Google, and Meta, among others. When you see the small “cr” icon appearing on images in LinkedIn, that is C2PA.

C2PA’s approach to attribution relies on the same certificate infrastructure that secures HTTPS connections, commercial Certificate Authorities that verify the identity of organizations before issuing signing certificates. It is proven at scale and broadly trusted. It is also not without critics.

The most technically rigorous of those critics is Dr. Neal Krawetz, a digital forensics researcher who writes under the name Hacker Factor and has published an exhaustive 27-page critique of C2PA’s specification. His alternative, called SEAL (Secure Evidence Attribution Label), uses DNS-based signing rather than commercial certificate authorities, making it free to implement, privacy-preserving by design, and, in his analysis, compliant with the Federal Rules of Evidence in ways that C2PA is not. SEAL is fully implemented and functional, but has yet to achieve major platform adoption. It is now being formally evaluated alongside C2PA by the Provenance and Authenticity Standards Assessment Working Group at the University of Maryland.

At a different point on the architectural spectrum, Liccium , built on the ISO-standard ISCC (International Standard Content Code), takes a fundamentally different approach to binding: no embedded metadata, no watermarks. Instead, a fingerprint is derived algorithmically from the content itself and registered in a federated public registry, where creators can attach signed declarations of rights, identity, and provenance. The fingerprint cannot be stripped because it is not in the file, it is the file, mathematically speaking. This makes Liccium particularly powerful for rights management, AI training opt-out, and managing provenance across large content inventories that move through many hands.

Sitting above C2PA in the standards hierarchy is JPEG Trust (ISO/IEC 21617) , the formal ISO layer built explicitly on and extending the C2PA engine. Published as an international standard in January 2025 by the Joint Photographic Experts Group, it covers the same ground as C2PA — provenance, authenticity, integrity, and intellectual property rights — but with formal ISO standing and native compatibility with the entire JPEG family of formats: JPEG 1, JPEG 2000, JPEG XL, JPEG XS, and JPEG AI. Since JPEG images represent the overwhelming majority of visual content in circulation, that format’s universality is not a small advantage. Its multi-part structure is revealing: Part 1 (Core Foundation) is published; Parts 2 (Trust Profiles — structured scenarios for assessing trustworthiness in specific deployment contexts), 3 (watermarking as a binding mechanism), and 4 (reference software) are in development. Part 3 will make JPEG Trust a direct interoperability layer with pixel-level watermarking tools. A second edition of Part 1 is already in the approval phase. JPEG Trust is not a competitor to C2PA, it is what happens when the international standards body formalizes what C2PA started.

Then there is OpenOrigins, whose Human-Oriented Proof System (HOPrS) ,now a Linux Foundation Decentralized Trust open-source lab, takes a forensic approach: it maps exactly where, at the pixel-quadrant level, an image has been edited compared to its registered original. Not a provenance chain. Not a signature. A visual proof of what changed and where.

And underneath all of it, functioning as the foundational vocabulary that every other system either builds on or must account for: IPTC, the standard that has defined creator, copyright, and caption fields in professional photography for three decades. IPTC is not an authentication system. It never was. But it remains the common language of image attribution, and the new generation of tools is increasingly designed to carry IPTC fields inside cryptographically signed structures, giving the old vocabulary a new security layer.

The Thing Nobody Wants to Say

There is a tendency in this space to talk about provenance technology as a solution to the AI problem: a way to label synthetic content so audiences can distinguish it from real photography. That framing is not wrong, exactly, but it is considerably narrower than what these tools actually offer, and it misses something important.

The question of whether an image was made by a camera or a generative AI model is a subset, a specific case, of the larger question of whether you can trust it. And trust does not derive from pixel origin. It derives from the credibility of whoever is responsible for the image.

A brand that uses AI to generate product imagery and signs it with verified credentials is being more transparent and more trustworthy than a brand that uses photographers but strips all metadata. A news organization that signs its photographs with C2PA credentials and maintains an unbroken provenance chain from camera to publication is giving its audience something it could never give them before: not just the claim that an image is authentic, but the means to verify it.

The question is never about the pixels. It is always about whether the provenance can be traced to a human being or organization you have reason to believe in.

That is genuinely new. For thirty years, the answer to “how do I know this image is what it claims to be?” was: you trust the publication, and the publication trusts its photographers, and the photographers trust their own files, and somewhere at the bottom of that chain is an honor system. Now the chain can be cryptographic. Now it can be verified, not assumed.

This does not eliminate the need for judgment. A valid C2PA signature proves that an image was signed by the organization whose name appears on it. It does not prove that the organization is telling the truth about what the image depicts. Provenance and accuracy are different things. But provenance is the precondition for accountability. You cannot hold someone responsible for a false image if you cannot prove it came from them. Now you can.

Where This Is Going

The tools described here are not waiting for adoption. It is already underway. Cameras from Nikon, Leica, Sony, and Canon are shipping with C2PA at the time of capture. Adobe’s export pipeline embeds credentials by default when the feature is enabled. AFP and Imatag have a live deployment covering news photography workflows. The C2PA Conformance Program was soft-launched in June 2025 at the Content Authenticity Summit at Cornell Tech, creating the first formal trust list for organizations signing content credentials. California’s AB 853 will require new recording devices to offer provenance data options from July 2026.

The gaps are real too. Platform adoption is uneven; not every social network preserves credentials. Verification requires tools that most audiences don’t yet have access to. The attribution layer , the question of who certifies the certifiers, remains genuinely contested. And the philosophical gap between what these systems can prove (provenance) and what audiences want to know (truth) will not be closed by technology alone.

But the direction is unmistakable. The industry is building, for the first time, a trust infrastructure for visual content that is verifiable rather than asserted. That works not because pixels can prove themselves, but because the people and organizations behind the pixels can now prove who they are and be held accountable for what they sign.

That is what authenticity in the AI era actually looks like. Not a detection algorithm. A verified human signature.

GO DEEPER

Securing the Visual Record: A Technical and Philosophical Guide to Content Provenance

The full companion study with complete solution profiles, the four-layer framework, interoperability analysis, governance implications, and sourced references, is available as a free PDF download below:

Download the report at kaptur.co/provenance-report

Full Disclosure & Transparency

This guide is an independent technical analysis provided by MelcherSystem LLC.

- Professional Affiliations: The author is a early member of the Content Authenticity Initiative (CAI), a contributor to the

AI and Multimedia Authenticity Standards Collaboration, and contributes to the development of C2PA standards. MelcherSystem provides strategic consulting services to several organizations mentioned in this report, including Imatag. - Editorial Independence: All assessments, comparisons, and technical frameworks presented are the result of independent research. No compensation was received from any mentioned protocol or vendor specifically for inclusion in this article.

- AI Disclosure: Consistent with C2PA principles, this article was authored by Paul Melcher with technical assistance from AI for data synthesis and formatting. All claims and technical specifications have been human-verified for accuracy.