The AI product imagery industry has a favorite word: efficiency. Every pitch deck, every tool landing page, every case study opens with the same promise: faster, cheaper, more. Photoroom will generate your lifestyle shots in seconds. Pebblely will give you 40 background variations before lunch. Adcreative.ai will instantly turn simple garment photos into high-end AI fashion photoshoots. The math is always about subtraction: subtract the photoshoot, subtract the retouching, subtract the weeks of lead time. What remains, they assure you, is pure margin.

And the math works. Nobody disputes it. An AI-generated product image costs between $1 and $10. A traditional studio shoot costs $500 to $2,000 per product. For a catalog of a thousand SKUs, we’re talking about the difference between a small subscription fee and a six- or seven-figure production budget. When MANGO rolled out AI-generated imagery on its product detail pages last year, the logic was transparent: why would a brand selling fifteen-euro shorts invest in the same production infrastructure as a luxury house?
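To make the scale concrete, here is a back-of-the-envelope sketch of that arithmetic. It uses only the per-item ranges quoted above and assumes, for simplicity, one image per SKU; the function name is illustrative.

```python
def catalog_cost_range(sku_count, low_per_item, high_per_item):
    """Return the (low, high) total cost for a catalog, assuming one image per SKU."""
    return sku_count * low_per_item, sku_count * high_per_item


SKUS = 1_000

# Per-item ranges as quoted in the text: $1 to $10 for AI, $500 to $2,000 for a studio shoot.
ai_low, ai_high = catalog_cost_range(SKUS, 1, 10)
studio_low, studio_high = catalog_cost_range(SKUS, 500, 2_000)

print(f"AI imagery:   ${ai_low:,} to ${ai_high:,}")
print(f"Studio shoot: ${studio_low:,} to ${studio_high:,}")
```

On those assumptions the AI route lands between $1,000 and $10,000, and the studio route between $500,000 and $2,000,000.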

The cost story is real. But it’s also the only story anyone is telling. And that should make us uncomfortable.

Because here’s the question nobody in the AI imagery ecosystem seems eager to answer: Does it actually sell more?

Not “does it look good enough.” Not “can shoppers tell the difference.” Does the AI-generated product page, the one that cost you three dollars instead of three thousand, actually convert at the same rate? Does it drive the same average order value? Does it produce the same return rate? Does the customer who buys from an AI-rendered lifestyle shot come back at the same frequency as the one who bought from a photograph?

Nobody knows. And the remarkable thing is that nobody seems to be trying to find out.

The Feedback Loop That Doesn’t Exist

I went looking for independent data on this. Rigorous A/B tests comparing AI-generated product imagery against traditional photography, measured on actual conversion, actual revenue, and actual return rates. Conducted by someone who doesn’t sell AI photography tools.
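The test itself is not exotic. As a minimal sketch, assuming a simple two-variant split and a standard two-proportion z-test, the comparison could look like this. The function name and the traffic figures are hypothetical and do not come from any study cited in this piece.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare two conversion rates with a pooled two-proportion z-test.

    Returns (rate_a, rate_b, z, two_sided_p_value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Hypothetical traffic: variant A shows a photograph, variant B an AI-generated image.
photo_rate, ai_rate, z, p = two_proportion_z_test(300, 10_000, 250, 10_000)
print(f"photo: {photo_rate:.2%}  ai: {ai_rate:.2%}  z = {z:.2f}  p = {p:.3f}")
```

The same comparison would need to be repeated for average order value and return rate, which call for different tests, and the split would have to be randomized at the shopper level for the result to mean anything.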

What I found instead was an ecosystem of vendor-produced claims. Photoroom cites statistics about AI adoption. Hippist AI points to a single client case study. An Entrepreneur.com feature tells the story of “Sarah,” whose home décor store saw a 23 percent lift in conversions, alongside what reads unmistakably as a sponsored placement for an AI image generator.

The pattern is consistent. The tool vendors measure what they produce: images generated, time saved, and cost per asset. They do not measure what those images accomplish. And more importantly, they are structurally incapable of doing so, because the feedback loop simply doesn’t exist inside their products. They are production instruments marketed as growth instruments, and no one is asking for a receipt.

The analytics infrastructure that could answer these questions (A/B testing platforms, conversion tracking, and attribution modeling) exists. And the fact that it lives in separate tools isn’t inherently a problem. Specialized analytics platforms are arguably better read by the experts who operate them. The problem is that nobody has built the bridge. There is no shared data layer, no closed loop, no feedback mechanism connecting the moment of image creation to the moment of purchase. The AI image tools don’t talk to the analytics tools.

There’s an irony here worth pausing on. These are companies that have built their entire value proposition on the power of AI to transform creative workflows. They use machine learning to generate images, remove backgrounds, simulate lighting, and predict aesthetic preferences. And yet none of them have turned that same AI capability inward, toward measuring whether their own output actually works. The cobbler’s children, as they say, go barefoot.

This is the traceability gap.

The closest thing to independent evidence comes from a Stylitics and Aha Studio survey of 411 shoppers, which found that 71 percent couldn’t distinguish AI-generated fashion imagery from traditional photography. That’s a perception study, not a performance study. Knowing that shoppers can’t tell the difference tells you nothing about whether they buy at the same rate, return at the same rate, or trust the brand at the same level over time. The study also comes from a company that sells AI styling tools, which doesn’t disqualify it, but doesn’t exactly make it a disinterested party either.

So we’re left with a market that has enthusiastically adopted AI imagery on the strength of a cost argument, while remaining almost entirely in the dark about whether the revenue side of the equation holds up. The tools save money, yes, undoubtedly. Whether they make money is, at best, an open question.

The Consumer Is Not Getting Tired of Caring

Maybe all this won’t even matter. There’s a comforting narrative circulating in marketing departments: consumers will get used to AI-generated content. Disclosure fatigue will set in. People will stop asking whether an image is real, the way they stopped asking whether a phone photo was filtered. Give it time, and the whole authenticity question dissolves.

The data says otherwise.

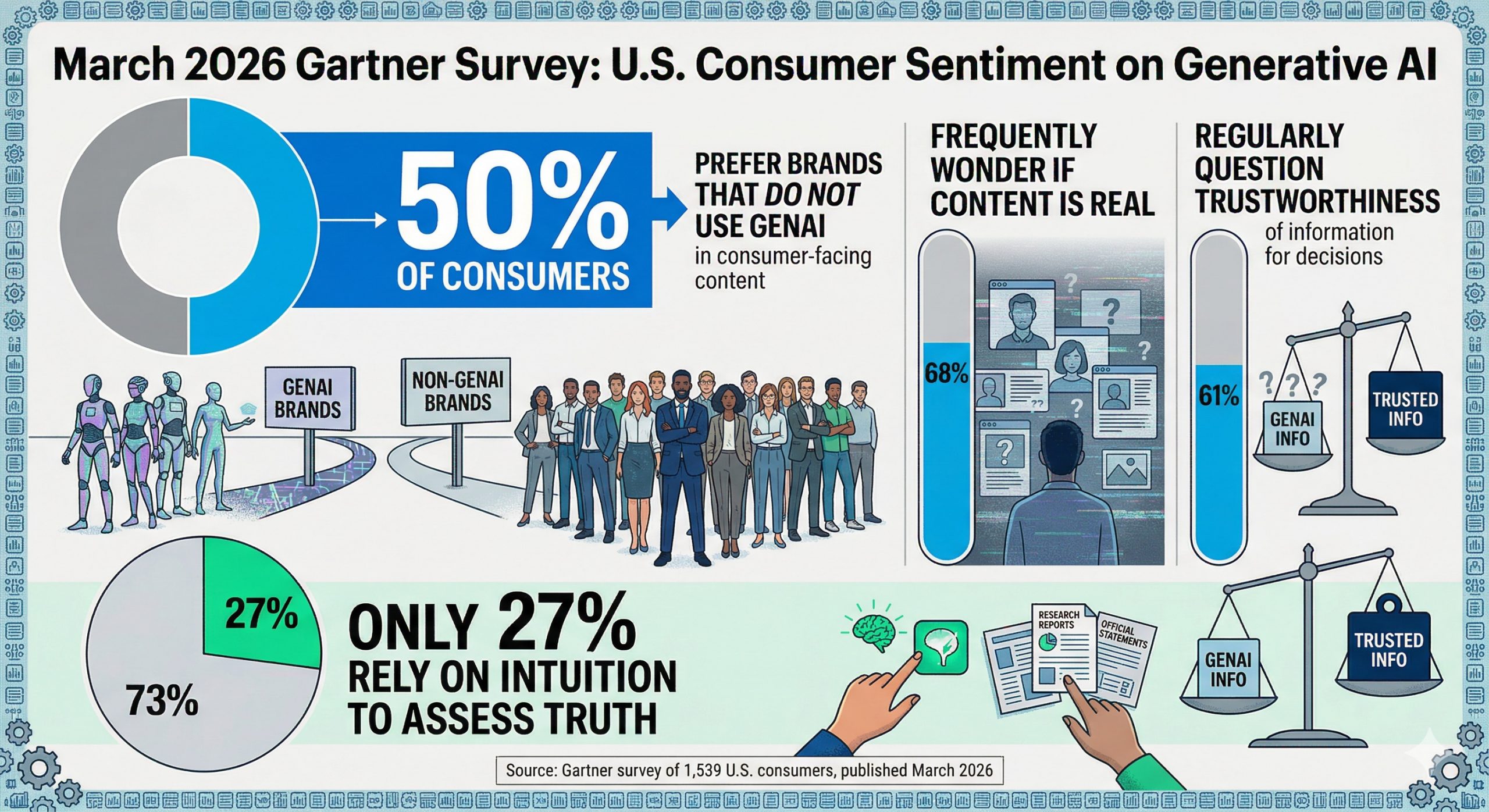

A Gartner survey of 1,539 U.S. consumers, published in March 2026, found that half of consumers would prefer to do business with brands that do not use generative AI in their consumer-facing content. Not a fringe minority: half. And the skepticism is deepening, not fading: 68 percent said they frequently wonder whether the content they encounter is real, and 61 percent regularly question whether the information they rely on for decisions is trustworthy. Perhaps most striking, only 27 percent of consumers still rely on intuition to assess whether something is true. The rest have shifted to active verification: checking sources, cross-referencing, and looking for proof.

That behavioral shift has a direct cost for brands. When a consumer pauses on a product page to investigate whether the image is real, when they reverse-image-search a lifestyle shot, when they check the return policy more carefully because something looks “too perfect,” each of those moments is friction. And friction, in e-commerce, is conversion loss. The Stylitics/Aha Studio study found that 37 percent of shoppers check return policies more carefully when they know an image is AI-generated. That’s not exhaustion. That’s caution. This is the opposite of normalization. And it has a price tag that never shows up in the AI tool’s dashboard.

A separate study from the Nuremberg Institute for Market Decisions found that when consumers are told content is AI-generated, they respond with measurably more skepticism and less engagement, even when the content itself is technically polished. The researchers called it a “trust penalty”: a bias that kicks in the moment someone learns a machine made what they’re looking at. Transparency, they concluded, reveals the problem without solving it.

The assumption that people will simply acclimate to synthetic imagery misreads how deception works. A lie doesn’t become acceptable through repetition. Repeated deception doesn’t produce indifference; it produces deeply anchored distrust. And distrust, once established, is corrosive. It doesn’t just affect the brand caught using AI imagery without disclosure. It contaminates the entire visual environment. When shoppers start wondering whether any product image is real, every brand pays the price, including the ones still shooting with cameras.

The Label and the Artwork

This brings us to the peculiar nature of what product imagery actually does. A product photograph has always served two masters. It describes: this is what the object looks like, this is its color, its texture, its scale. And it inspires: this is how the object will make you feel, this is the life it belongs in, this is the version of yourself it promises.

The descriptive function is straightforward. It benefits from clarity, consistency, and accuracy. AI handles this well, and may even improve on it: perfectly lit, perfectly angled, perfectly consistent across a thousand SKUs. For flat-lay catalog shots and white-background packshots, the argument for AI is strong.

The aspirational function is something else entirely. Aspiration depends on emotional resonance, on distinctiveness, on the feeling that someone made a creative choice about how to present this object. It feeds on the kind of specificity that comes from a photographer deciding that this angle, in this light, at this moment, tells the story. When every AI tool draws from the same training data and converges on the same statistically optimized visual language, aspiration starts to flatten. The images look competent and feel like nothing.

And here’s where the traceability gap becomes dangerous. Because without closed-loop measurement, brands can’t distinguish between these two functions. They can’t see that their AI-generated packshots are converting fine while their AI-generated lifestyle imagery is quietly underperforming. They can’t detect that return rates have crept up because the aspirational promise exceeded what the physical product delivers, a problem that matters enormously when 22 percent of e-commerce returns already happen because the item looks different in person. The cost savings are visible. The revenue erosion is invisible. And the tools aren’t built to make it visible.
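What closing that loop could look like, in the smallest possible form, is a join between image provenance and purchase outcomes. The sketch below is an illustration under stated assumptions, not a description of any existing tool: the `ImageRecord` and `PageView` types, their fields, and the sample data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageRecord:
    image_id: str
    source: str    # hypothetical label: "ai" or "photo"
    function: str  # hypothetical label: "packshot" or "lifestyle"


@dataclass(frozen=True)
class PageView:
    image_id: str
    purchased: bool
    returned: bool


def rates_by_segment(images, views):
    """Join views to image provenance; report conversion and return rates per segment."""
    by_id = {img.image_id: img for img in images}
    counts = defaultdict(lambda: {"views": 0, "purchases": 0, "returns": 0})
    for view in views:
        img = by_id.get(view.image_id)
        if img is None:
            continue  # no provenance recorded, so the view cannot be attributed
        seg = counts[(img.source, img.function)]
        seg["views"] += 1
        seg["purchases"] += int(view.purchased)
        seg["returns"] += int(view.purchased and view.returned)
    report = {}
    for key, c in counts.items():
        report[key] = {
            "conversion_rate": c["purchases"] / c["views"],
            # Return rate is undefined when a segment has no purchases.
            "return_rate": c["returns"] / c["purchases"] if c["purchases"] else None,
        }
    return report


# Made-up sample data, for illustration only.
images = [
    ImageRecord("img-1", "ai", "packshot"),
    ImageRecord("img-2", "ai", "lifestyle"),
]
views = [
    PageView("img-1", purchased=True, returned=False),
    PageView("img-1", purchased=False, returned=False),
    PageView("img-2", purchased=True, returned=True),
    PageView("img-2", purchased=False, returned=False),
]
report = rates_by_segment(images, views)
for segment, rates in sorted(report.items()):
    print(segment, rates)
```

The point of segmenting by both source and function is that a blended average would hide exactly the case described above: packshots converting fine while lifestyle imagery quietly drives returns.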

A New Category With New Metrics

Maybe the real problem is that we keep asking the wrong question. The industry frames this as a substitution (can AI imagery replace traditional photography?) and then measures the answer in cost terms. But what if AI-generated commercial imagery isn’t a cheaper version of photography? What if it’s a different thing altogether, with its own economics, its own relationship to the consumer, and its own way of building or eroding trust?

A photograph carries an implicit claim: this object was in front of a camera. Someone was in the room with it. What you see is a mediated version of something that exists. An AI-generated image carries a different implicit claim: this is what the object should look like, according to a statistical model trained on what similar objects have looked like in the past. One is testimony. The other is prediction.

Both can be useful. Both can sell products. But they work differently, and pretending they’re interchangeable, while measuring only the cost side, is how you end up with an industry flying blind.

If AI-generated commercial imagery is its own medium, then it needs its own creative direction. Right now, most tools produce competent, interchangeable output because they draw from the same training data and optimize for the same statistical middle. The results look professional. They also look like everyone else’s. That’s a problem when your product page sits three clicks away from a competitor’s product page running the same tool with the same defaults.

The opportunity isn’t in brand guidelines. Matching every image to the brand’s hex colors and logo placement is not the answer. The real question is whether brands can inject genuine style into their AI pipelines: custom visual models, trained on a specific aesthetic sensibility, that make a brand’s output as recognizable as a signature photographer’s work used to be. Think less “brand compliance” and more “visual voice.” And do the AI tools they use allow them to do so?

This is creating a new role: call it the AI stylist. Someone who doesn’t generate images manually but trains, tunes, and curates the models that do. Someone who understands how light should behave on silk versus cotton, how a room should feel for a Scandinavian furniture brand versus a Milanese one, how to encode the kind of specificity that keeps a product image from dissolving into the generic.

And the people best equipped for this work are the ones the efficiency narrative has been written to displace. Photographers who spent decades learning how light and composition create desire. Stylists who know how a sleeve should fall, how a surface should catch a highlight, how a product should breathe in a frame. Their craft didn’t become obsolete when AI arrived. It became the scarcest input in a pipeline drowning in sameness. The accumulated judgment of thousands of shoots is precisely the training signal a custom model needs, and precisely what no amount of prompt engineering can replicate.

The irony would be poetic if it weren’t so practical: the future of AI-generated imagery may depend on the people it was supposed to replace.

Author: Paul Melcher

Paul Melcher is a highly influential and visionary leader in visual tech, with 20+ years of experience in licensing, tech innovation, and entrepreneurship. He is the Managing Director of MelcherSystem and has held executive roles at Corbis, Gamma Press, Stipple, and more. Melcher received a Digital Media Licensing Association Award and has been named among the “100 most influential individuals in American photography.”