On March 10, the European Parliament voted overwhelmingly to demand a new licensing framework for AI training. Across the Atlantic, private data brokers are raising tens of millions to license content directly. Both approaches claim to solve the same problem. Neither does.

The Axel Voss resolution passed with 460 votes in favor, a commanding majority signaling that the EU has moved past the question of whether AI companies should pay for copyrighted training data and into the mechanics of how. The resolution calls for mandatory transparency, fair remuneration, retroactive compensation for past ingestion, and a territorial rule requiring any AI model operating in the EU to comply with European copyright standards, regardless of where the training occurred.

It reads like a victory for creators. In practice, it may be the beginning of a very expensive administrative experiment that delivers remarkably little to the people it claims to protect.

The problem is structural. The resolution leans heavily on Collective Management Organizations, the same institutions that have managed music royalties, private copying levies, and photocopy rights across Europe for decades. CMOs are a known quantity.

And what we know about them should give visual creators pause.

The European Model: Bureaucratic Friction and the Proxy Problem

The EU’s framework for collective licensing rests on Extended Collective Licensing, or ECL. Under ECL, a CMO is authorized by law to license works on behalf of all rightsholders in a given category, even those who never signed up. The idea is elegant: one blanket license, one payment stream, universal coverage. The Voss resolution wants the European Commission to build this infrastructure for AI training, with the EUIPO acting as a “trusted intermediary” to manage registries of licensing offers and opt-outs.

The resolution also introduces a rebuttable presumption: if an AI model placed on the EU market fails to meet the proposed transparency requirements, it is presumed to have been trained on protected works. The burden shifts to the developer. These are genuine regulatory teeth, if the body they’re attached to can chew.

The trouble starts with what happens after the money is collected.

CMOs have long been criticized for management fees that eat into creator payouts; 15 to 30 percent is common in European collecting societies. But the deeper problem is distribution. The Voss resolution demands itemized transparency from AI developers: a full list of every copyrighted work used in training. Assume, for the sake of argument, that this mandate is enforced and AI companies hand over complete ingestion logs. The CMO now has a list of billions of images. It still has to match each one to a specific rights holder. That requires a comprehensive visual rights registry, a database that connects content to creators at a scale never before built for photography or illustration. The resolution’s answer is to position the EUIPO as a “trusted intermediary” maintaining a centralized, machine-readable register of licensing offers and opt-outs. In practice, this means the EU is proposing to build, from scratch, the largest visual rights database in history.

For future training, this is at least structurally coherent, if expensive, slow, and dependent on mass creator registration. For past training, it collapses entirely. The resolution asks the Commission to examine compensation for content already inside deployed models: training data scraped years ago without persistent provenance, cryptographic metadata, or any record of which specific images went in. No transparency mandate can retroactively produce ingestion logs that were never created. For this historical debt, CMOs would have no choice but to fall back on proxy distributions, allocating payments based on estimates of market share rather than verified usage. The EU is building a redistribution system for a dataset it cannot inventory.
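A minimal sketch can make the difference between proxy and usage-based distribution concrete. Everything in it is hypothetical: the pool size, the 20 percent fee (picked from the 15 to 30 percent range mentioned above), the three rightsholder categories, their market shares, and their image counts. The "verified" split also assumes the very ingestion logs that do not exist for past training; it is there only as a counterfactual.

```python
"""Contrast a proxy (market-share) distribution with a usage-based one.

All names and numbers are hypothetical illustrations, not data from any CMO.
"""


def net_pool(gross: float, fee_rate: float) -> float:
    """Amount left for distribution after the CMO's management fee."""
    if not 0.0 <= fee_rate < 1.0:
        raise ValueError("fee_rate must be in [0, 1)")
    return gross * (1.0 - fee_rate)


def pro_rata(pool: float, weights: dict[str, float]) -> dict[str, float]:
    """Split a pool in proportion to each rightsholder's weight."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {holder: pool * w / total for holder, w in weights.items()}


# Hypothetical inputs: a 1,000,000 EUR pool and a 20% management fee.
pool = net_pool(1_000_000, 0.20)

# Proxy: estimated share of the licensing market (what a CMO can observe).
market_share = {"large_agency": 0.70, "mid_stock_contributor": 0.25, "independent": 0.05}

# Counterfactual: images actually found in the training set (needs ingestion logs).
images_used = {"large_agency": 400_000, "mid_stock_contributor": 300_000, "independent": 300_000}

proxy = pro_rata(pool, market_share)
verified = pro_rata(pool, images_used)

for holder in market_share:
    print(f"{holder:>22}: proxy {proxy[holder]:>10,.0f}  verified {verified[holder]:>10,.0f}")
```

With these made-up inputs the independent creator receives 40,000 under the proxy and 240,000 under verified usage. The numbers say nothing about real markets; they only show that a market-share proxy reproduces existing market power rather than tracking what was actually ingested.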

The American Model: The Private Data Club

The US has taken a different path, or rather, no path at all. There is no federal mandate for AI training licenses, no legislative framework for collective management, and no congressional appetite for creating one. The US Copyright Office’s May 2025 report on generative AI training acknowledged the potential for extended collective licensing but ultimately recommended letting the voluntary market develop on its own. It also flagged the elephant in the room: antitrust.

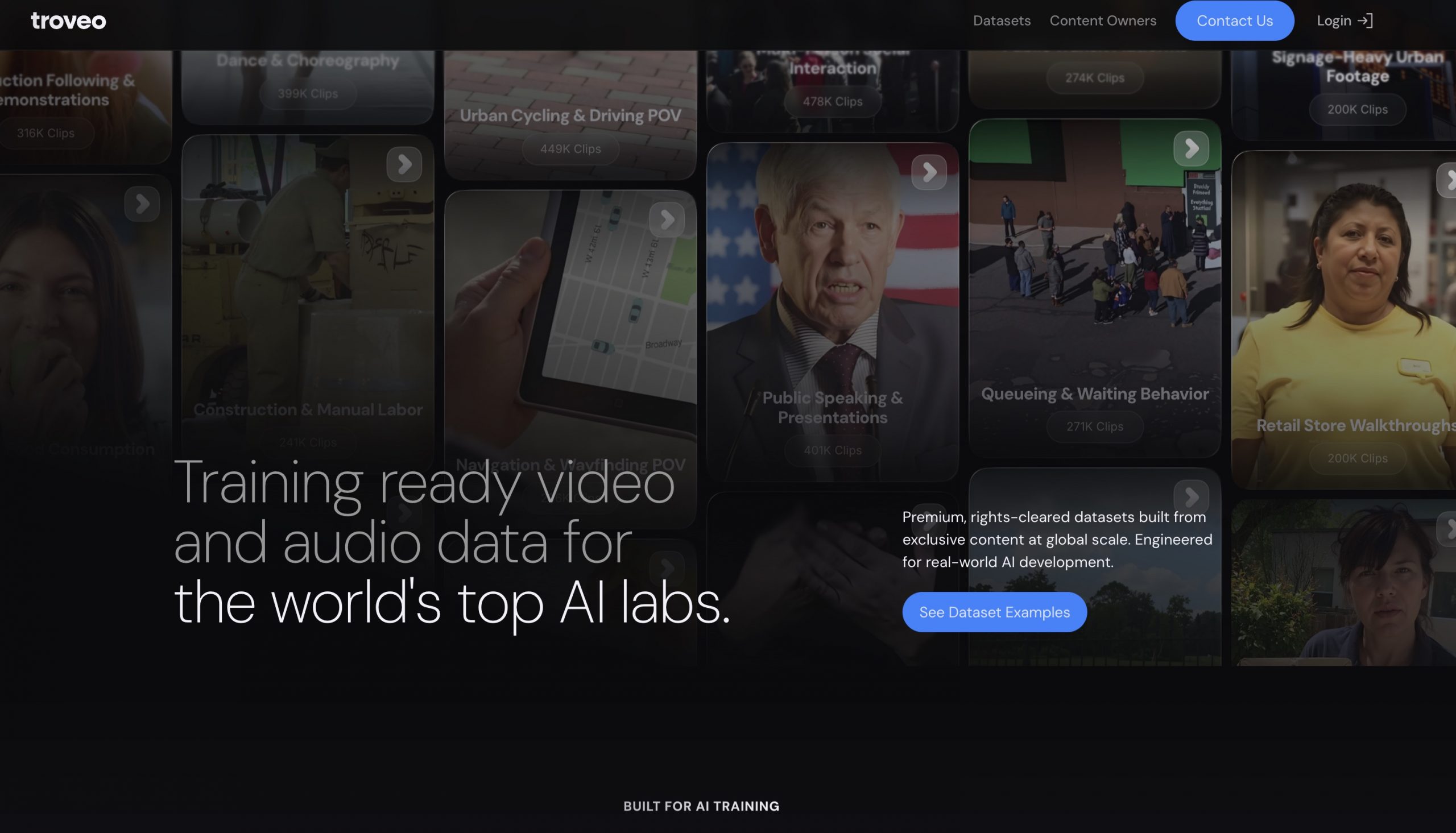

Into this vacuum have stepped private data brokers. Protege, founded in 2024 and now backed by $65 million, including a round led by Andreessen Horowitz, has positioned itself as the infrastructure layer between data owners and AI labs. It works with the majority of the Magnificent Seven, the dominant AI developers, to source licensed training data across healthcare, video, audio, and motion capture. Troveo focuses specifically on video content, connecting filmmakers and creators with AI companies that need rights-cleared footage. By late 2025, Troveo reported more than $5 million in payouts to content owners and was ingesting 6,000 terabytes of video per month.

These are real businesses solving a real problem. They are also solving it for a very specific slice of the market.

Protege’s pitch to investors is instructive. The company aggregates “trusted, real-world data”: premium, curated, rights-cleared datasets in specialized verticals. The economics favor high-value, high-signal content such as medical imaging, professional video archives, and proprietary audio. The frictional cost of onboarding an individual photographer, managing micro-contracts, and routing micro-payments for each of the millions of ordinary images that constitute the bulk of AI training sets does not pencil out. There is no economic incentive for these platforms to serve the long tail.

Troveo is slightly more democratic; it lets individual creators upload footage and has built a network of over 2,400 content licensors, but its model is still organized around volume licensing of video content to a small number of AI buyers. The 350 million video clips in its library represent a genuine marketplace. But the independent still photographer, the mid-tier stock contributor, the photojournalist, the Instagram influencer, the fine art photographer: these creators, whose work was scraped years before any of these platforms existed, remain outside the system.

The US private brokerage model is tiered market exclusion dressed in the language of creator empowerment. And the venture capital behind it tells you who the real customer is: Protege’s investors include Andreessen Horowitz, the same firm that backs multiple major AI labs.

The Antitrust Trap

Could American photographers organize collectively to bargain with AI labs? In theory, yes. In practice, the Sherman Antitrust Act makes this extraordinarily dangerous.

Section 1 of the Sherman Act prohibits horizontal agreements that restrain trade. If independent visual creators formed an organization to set a baseline tariff for AI training data, they would be establishing a collective price floor, an arrangement the Department of Justice could prosecute as price fixing. This is a per se violation: no inquiry into reasonableness, no balancing of harms. The creators would be treated as a cartel.

The music industry has a workaround. ASCAP and BMI operate under consent decrees, court-supervised agreements that let them license collectively in exchange for regulatory oversight and, when negotiations fail, rate-setting by a federal judge. These decrees are decades old, the product of a specific historical moment, and nothing equivalent, by decree or by legislation, is pending for visual content licensing. The US Copyright Office acknowledged the antitrust tension in its 2025 report but deferred to the DOJ for guidance on a possible exemption and to the FTC for enforcement. Neither agency has acted.

Without a congressional safe harbor, American creators face a catch-22. They can negotiate individually and be ignored because no single photographer has leverage against OpenAI’s legal department. Or they can organize collectively and risk prosecution. The AI labs benefit from this asymmetry. They operate with enormous consolidated market power. The creators they depend on are atomized by design.

There is an irony here. If the EU implements its ECL framework, US photographers whose work was ingested by models operating in Europe would be covered automatically, as individual beneficiaries of a foreign sovereign licensing scheme, not as members of a domestic cartel. They could register with European CMOs individually to improve their chances of accurate distribution. What they could not do is organize collectively on the US side to bargain as a bloc. The EU framework could inadvertently become the payment channel that American creators are legally prohibited from building for themselves.

The Squeezed Middle

Stand back and look at both systems together, and a pattern emerges. The EU builds a universal bureaucracy that collects from everyone and distributes through opaque proxies. The US builds a premium marketplace that serves the top tier and ignores the rest. In both cases, the independent creator (the photographer, the illustrator, the mid-career visual artist) falls through the gap.

The Voss resolution acknowledges this implicitly by calling for “voluntary sectoral licensing solutions” rather than a single mandatory framework. The Kluwer analysis is blunter: the report’s focus on licensing as the primary mechanism “seems premature, and other options should at least be explored.”

What options? The resolution mentions digital watermarking and technological standards for transparency, but only as supporting infrastructure for the licensing regime it already assumes will work. The US Copyright Office similarly gestures toward ECL as a potential remedy for market failure without confronting the fact that the market has already failed for a specific, large class of creators: those who generate most of the visual content AI systems consume.

The UK: Threading the Needle, Missing the Eye

If any jurisdiction should be able to make licensing-first work, it is the United Kingdom. The UK has the strongest existing collective management infrastructure in the English-speaking world, a government that has publicly committed to rejecting broad text-and-data-mining exceptions, and a House of Lords committee that published a 180-page report on March 6 calling for exactly the framework the visual industry needs: licensing as the default, statutory transparency obligations for AI developers, and provenance standards attached to individual assets.

The political trajectory has been dramatic. The government initially proposed a commercial TDM exception with opt-out, essentially inviting AI companies to scrape freely unless a creator posted a “no trespassing” sign. The creative industries revolted. In January 2026, Secretaries of State Liz Kendall and Lisa Nandy acknowledged the government had been wrong to express a preference and declared a “reset.” The Lords committee went further: rule out the exception entirely, stop weakening copyright to attract US tech companies, and build a licensing ecosystem backed by enforceable transparency.

And then… nothing. The government’s 125-page copyright report, published on March 18 to meet its statutory deadline under the Data (Use and Access) Act, formally abandoned the opt-out TDM exception. But it replaced it with nothing. The report states that the government “will not introduce reforms to copyright law until we are confident that they will meet our objectives” and proposes instead to “gather further evidence,” “consider alternative approaches,” and “monitor developments” with no timeline for a final policy decision. On licensing, the government’s position is explicit: “We propose not to intervene in the licensing market at this stage.” On transparency: no legislation, just “best practice” guidance developed with industry. On enforcement: continued monitoring. On models trained abroad: wait for the Getty v. Stability AI appeal. The House of Lords asked for urgency and a licensing-first commitment. The government responded with a literature review.

What makes the UK case revealing is where the infrastructure actually exists and where it does not. The Copyright Licensing Agency was developing a gen-AI training license for text-based works. Publishers’ Licensing Services rolled out an opt-in collective scheme on March 11. These cover books, journals, and magazines, sectors with established CMOs, clear rights chains, and identifiable catalog data. The CLA’s membership does include visual content bodies (PICSEL for photographers, DACS for designers and artists), but neither has launched an AI training license. The text side moved first because it had the catalog infrastructure. The visual side faces fragmented rights chains, inconsistent metadata, and a volume of scraped images that dwarfs anything the existing system was designed to handle.

The Lords committee heard testimony about C2PA provenance standards, watermarking, and fingerprinting as the technical backbone for a licensing-first system. But the same committee acknowledged that EU opt-out mechanisms built on similar tools had failed to support a strong licensing market, calling the technical foundations “unreliable, patchy, and burdensome for individuals.” Government minister Chris Bryant put it plainly: finding a practical solution for individuals who are not part of a collecting society remains one of the core unsolved problems.

The UK, in other words, has the political will, the institutional history, and the regulatory ambition, yet it still cannot close the loop on visual content. If the country with the strongest CMO tradition in Europe cannot build a functioning licensing system for AI-ingested images, the question is whether any licensing model can.

Beyond the Licensing Frame

The honest conclusion is uncomfortable. Licensing, whether collective, extended, compulsory, or private, assumes a transaction can occur. A buyer pays a fee, a rightsholder receives it, and the exchange is logged. For this to work at the scale of AI training, three conditions must be met:

the content must be identifiable,

the creator must be reachable,

and the payment must be worth more than the cost of routing it.

For the vast majority of visual content ingested into AI models, none of these conditions hold.
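The three conditions can be expressed as a single check. The sketch below is illustrative only: the `Claim` record and its fields, the 0.25 EUR routing cost, the 10 million EUR pool, and the 5 billion image count used to derive a per-image payment are all hypothetical assumptions, not figures from any CMO or regulator.

```python
"""Check the three preconditions for a licensing transaction on one item.

The Claim fields and every number below are hypothetical assumptions.
"""
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    content_id: Optional[str]       # identifier matched to the work, if any
    creator_contact: Optional[str]  # payment destination for the creator, if any
    payment_eur: float              # amount owed for this item


def transaction_viable(claim: Claim, routing_cost_eur: float) -> bool:
    """True only if the content is identifiable, the creator is reachable,
    and the payment is worth more than the cost of routing it."""
    identifiable = claim.content_id is not None
    reachable = claim.creator_contact is not None
    worth_routing = claim.payment_eur > routing_cost_eur
    return identifiable and reachable and worth_routing


# Hypothetical: a 10M EUR pool spread evenly over 5 billion training images.
per_image = 10_000_000 / 5_000_000_000  # 0.002 EUR, a fifth of a cent

# Best case: the image is identified and the creator is reachable.
claim = Claim(content_id="hypothetical-id", creator_contact="hypothetical-account",
              payment_eur=per_image)
print(transaction_viable(claim, routing_cost_eur=0.25))  # False
```

Even in the best case, where the first two conditions hold, a per-image payment of a fifth of a cent fails the third against a routing cost of this order.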

Provenance technology (C2PA manifests, steganographic watermarks) could address the identification problem going forward. But these require action at the point of creation. They cannot retroactively tag images already inside deployed models. The one standard that can work after the fact is the ISCC (ISO 24138), which generates a similarity-preserving fingerprint directly from the content itself. No prior embedding required. A creator can generate an ISCC code from their portfolio today; if an AI developer discloses their training data, matching codes can be produced from that dataset. Modified, compressed, or reformatted copies still cluster.
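To see why a content-derived fingerprint lets modified copies still cluster, consider a deliberately crude stand-in. The sketch below is not the ISCC algorithm specified in ISO 24138; it uses a simple average hash (one bit per pixel, set when the pixel is brighter than the image mean) over a tiny hypothetical grayscale grid, and compares codes by Hamming distance.

```python
"""Toy similarity-preserving fingerprint (average hash) and Hamming matching.

This is NOT the ISCC algorithm; it is a simple stand-in showing why
content-derived codes let near-duplicates land close together.
Images are modelled as small 2D lists of grayscale values (0-255).
"""


def average_hash(pixels: list[list[int]]) -> int:
    """One bit per pixel, row-major: 1 if brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 198, 220],
    [18, 28, 202, 212],
]
# A "recompressed" copy: every pixel nudged slightly, structure unchanged.
recompressed = [[min(255, p + 7) for p in row] for row in original]
# A different image: the same pixels mirrored left-to-right.
mirrored = [list(reversed(row)) for row in original]

h0, h1, h2 = (average_hash(img) for img in (original, recompressed, mirrored))
print(hamming(h0, h1))  # 0: the near-duplicate keeps the same code
print(hamming(h0, h2))  # 16: every bit differs for the mirrored image
```

A real matcher would treat codes within some distance threshold as the same work; choosing that threshold is a tuning decision, and none is taken from a standard here.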

The TDM·AI protocol is already building on ISCC to bind opt-out and licensing declarations to content-derived identifiers using verifiable credentials. This is the closest thing that exists to a retroactive identification solution, but it still requires the transparency mandate the EU is demanding and has yet to enforce. Even with perfect identification, the economics of micro-distribution remain punishing. A CMO that knows exactly which 50,000 images from a given photographer were used in a training run still has to route payments that, with the pool divided across billions of total images, amount to fractions of a cent per image.

Some observers have proposed protocol-level filtering or decentralized ledger systems that could automate micro-transactions without CMO overhead. These are interesting technical ideas. They are also years from deployment at scale, and they depend on AI developers voluntarily complying with systems designed to cost them money.

The EU’s territorial enforcement clause, holding non-compliant models liable if they operate in Europe, is the only mechanism on the table that creates genuine economic pressure. If an AI model trained on unlicensed European content can be barred from the EU market, the cost of non-compliance becomes concrete. The question is whether the Commission will actually enforce it, and whether enforcement will benefit individual creators or simply create a new revenue stream for whoever administers the fines.

What Remains

The Voss resolution is a political signal, not an operating system. The US private market is building efficient infrastructure for a clientele that excludes most visual creators by design. And the UK, the jurisdiction with the strongest case for licensing-first, just published a 125-page report that commits to… nothing.

The real work is recognizing that all three models are built for intermediaries (CMOs in Europe, data brokers in America, consultants and working groups in the UK), and that the creator sits at the end of a chain that extracts value at every link. The technology to make transparent, creator-direct remuneration possible exists in nascent form. The regulatory will to mandate it is emerging. The economic model that connects the two has not yet been invented.

That is the gap. Not a policy gap or a technology gap, but a market design problem. Someone needs to build the system that makes a $0.003 payment worth routing, and that probably means rethinking the unit of transaction entirely. Aggregate rights pools, minimum distribution thresholds, creator-owned cooperatives with antitrust exemptions, platform-level levies redistributed through transparent algorithmic matching: these are directions, not solutions. But they at least acknowledge the scale of the problem rather than pretending that existing structures can absorb it.
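Of the directions listed above, a minimum distribution threshold is the easiest to sketch. The version below is an assumption-laden illustration, not a proposal from any CMO or from the author: it credits each $0.003 use to a creator's running balance and only routes a payment once that balance reaches a hypothetical $10 threshold, carrying smaller balances forward. The class name, creator IDs, and use counts are all made up, and amounts are stored as integer micro-dollars to avoid floating-point drift.

```python
"""Minimum distribution threshold with carry-forward balances.

Hypothetical sketch: threshold, per-use rate, and creator IDs are assumptions.
Amounts are kept in integer micro-dollars to avoid float rounding drift.
"""
from collections import defaultdict

MICRO = 1_000_000  # micro-dollars per dollar


class ThresholdLedger:
    def __init__(self, threshold_usd: float):
        self.threshold = round(threshold_usd * MICRO)
        self.balances: dict[str, int] = defaultdict(int)

    def credit(self, creator: str, amount_usd: float) -> None:
        """Record an earned micro-payment without routing it yet."""
        self.balances[creator] += round(amount_usd * MICRO)

    def settle(self) -> dict[str, float]:
        """Pay out every balance at or above the threshold; carry the rest."""
        payouts = {}
        for creator, balance in self.balances.items():
            if balance >= self.threshold:
                payouts[creator] = balance / MICRO
                self.balances[creator] = 0  # value update only, safe mid-iteration
        return payouts


ledger = ThresholdLedger(threshold_usd=10.00)
# Hypothetical usage counts: 5,000 uses for one creator, 100 for another.
for _ in range(5_000):
    ledger.credit("creator_a", 0.003)
for _ in range(100):
    ledger.credit("creator_b", 0.003)
print(ledger.settle())  # {'creator_a': 15.0}
```

The trade-off shows in the output: the creator with 5,000 uses is paid, while the one with 100 uses keeps waiting, possibly indefinitely. A threshold cuts routing costs by changing the unit of transaction from the single use to the accumulated balance, but on its own it does not create value for the long tail.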

Until then, collective licensing will remain what it is today: a promise that sounds like justice and functions like a tax on an industry that can no longer afford one.

Author: Paul Melcher

Paul Melcher is a highly influential and visionary leader in visual tech, with 20+ years of experience in licensing, tech innovation, and entrepreneurship. He is the Managing Director of MelcherSystem and has held executive roles at Corbis, Gamma Press, Stipple, and more. Melcher received a Digital Media Licensing Association Award and has been named among the “100 most influential individuals in American photography.”