Fifteen months ago, right here in Kaptur, I asked a simple question: who actually needs a video generation tool? Not movie makers. Not documentarians. Not the millions of people who already have one of the most powerful video-creation instruments ever invented: a phone in their pocket. My argument was straightforward: most people don’t produce videos for a living, and the videos they do create are recordings of real things happening around them. Family gatherings, concerts, random moments. Acts of witnessing.

Last week, OpenAI answered my question by shutting down Sora.

The app that was supposed to be the “TikTok of artificial intelligence” went from number one on the App Store to being discontinued in six months. Global users peaked at around one million, then fell below 500,000, even as the app actively encouraged people to upload their own faces and create elaborate scenes. Total lifetime revenue: $2.1 million. Estimated daily compute costs: $15 million. OpenAI’s $1 billion deal with Disney, which would have let users generate videos with over 200 copyrighted characters, collapsed alongside it. No money ever changed hands.

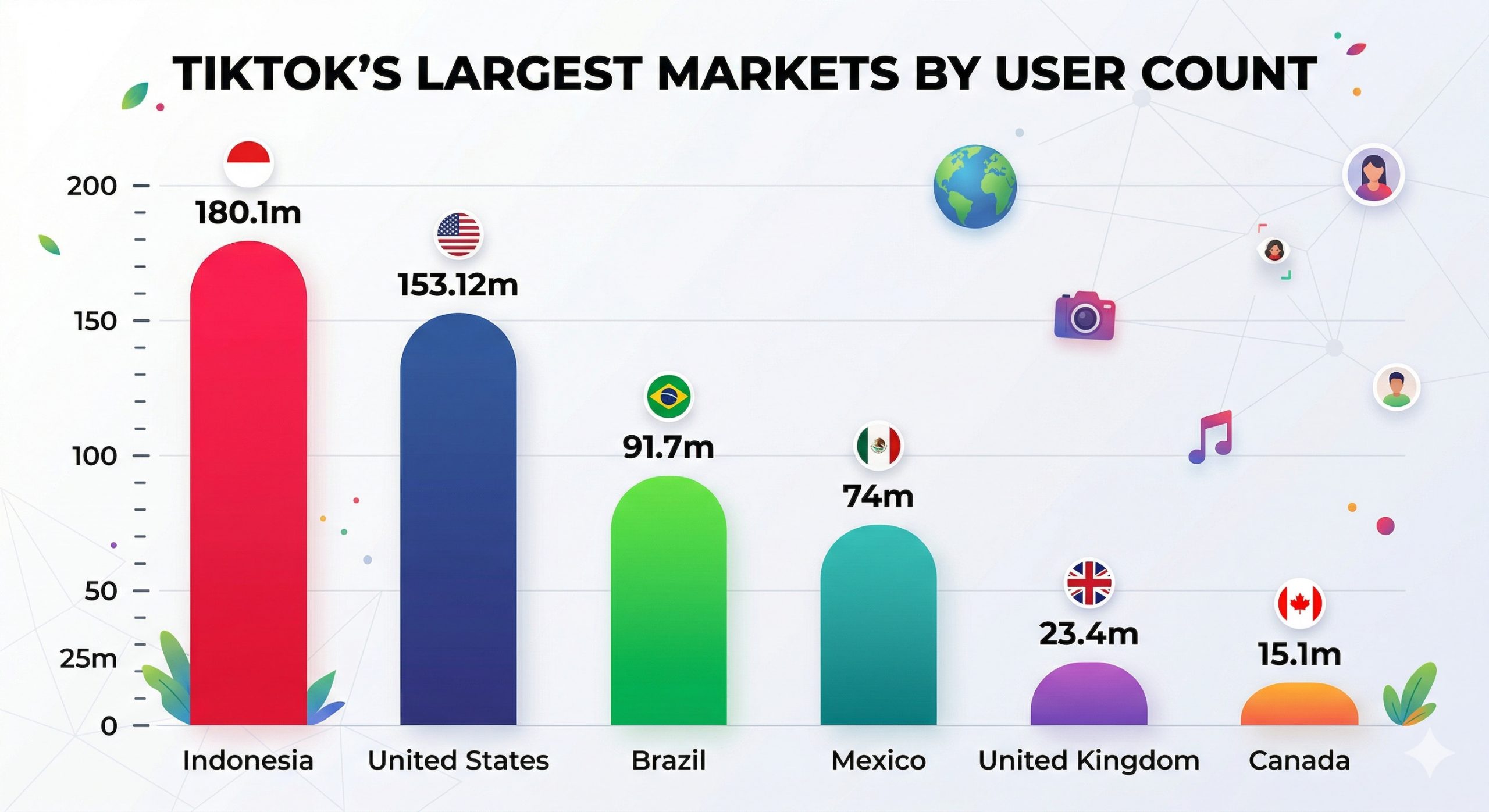

To understand the scale of this mismatch, consider what happened on the other side of the video landscape during the same period. TikTok, a platform built entirely on people filming real things with their phones, reached 1.9 billion monthly active users and is approaching $33 billion in annual revenue. YouTube remains the world’s second-largest search engine. The appetite for video has never been greater. What people don’t have an appetite for, it turns out, is generating videos from prompts.

The broken toy

The pattern of Sora’s decline tells its own story. Downloads dropped 45% by January 2026, consumer spending fell from a peak of $540,000 in December to $367,000 in January, and by the time of the shutdown announcement, the app had fallen to 101st on the App Store. The classic novelty curve: initial curiosity, enthusiastic experimentation, rapid abandonment.

But there’s something more fundamental at work here than novelty wearing off. AI video generation requires something most people simply don’t have: a story to tell.

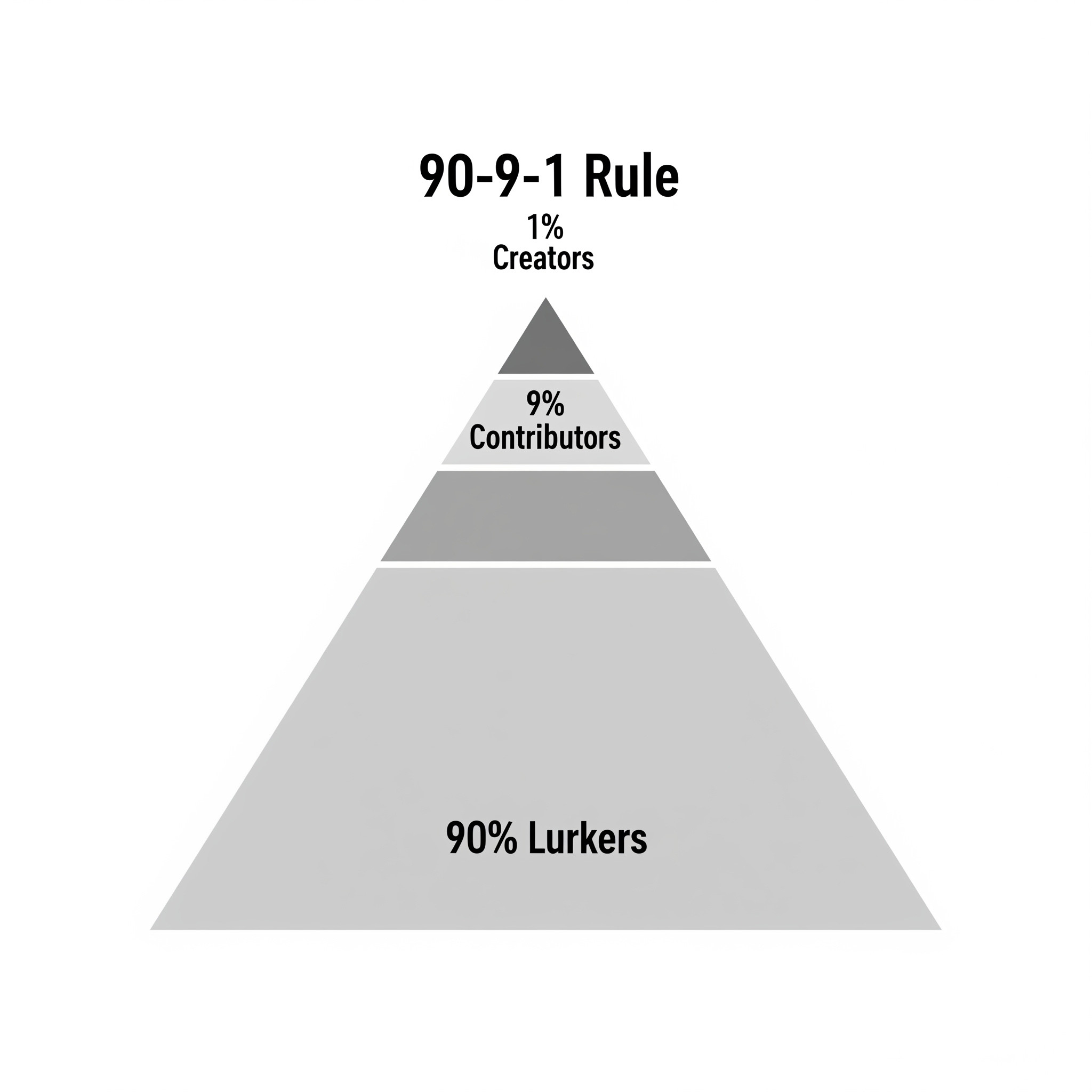

There’s a well-documented principle in online communities known as the 90-9-1 rule, first articulated by Jakob Nielsen and confirmed across two decades of research: in any online community, roughly 90% of users are lurkers who consume content without contributing, 9% engage occasionally, and only 1% actively create. The exact ratios vary (some researchers have found distributions as extreme as 99-1-0.1), but the fundamental pattern holds across every platform studied. The vast majority of people are consumers of content, not producers.

This isn’t laziness. It reflects a basic reality about human beings: most people are not storytellers. They don’t have the urge to construct narratives, the instinct to build a scene from nothing, or the skills to translate an idea into a compelling sequence of images and sound. These are specialized abilities (craft, in the truest sense of the word) developed through years of practice by the small percentage of people who become creators, filmmakers, and visual storytellers. Storytelling is rare, and because it’s rare, it’s valued. That’s why Hollywood is a multi-billion-dollar global industry: we pay handsomely for the people who can do what most of us cannot.

AI video generation tools like Sora assumed that the barrier to video creation was technical: if only everyone could generate video from a text prompt, everyone would. What they failed to grasp is that the barrier was never technical. It was creative. Most people don’t make videos because they don’t have videos to make. They don’t have stories burning to get out. Unless we start teaching story creation and film language in schools globally, replacing composition class with visual narrative, this won’t change. And there’s no sign of that happening.

The copyrighted character explosion on Sora proves this point precisely. When CNBC gained access to the app, what they found wasn’t a platform of original storytelling. It was a platform flooded with SpongeBob, Pikachu, Rick and Morty, characters from Despicable Me, and other people’s intellectual property put into absurd scenarios. 404 Media described opening the app and immediately encountering Pikachu stealing from a CVS and SpongeBob in a Nazi uniform. Even Sam Altman himself appeared in a user-generated video standing in a field with Pokémon characters, captioned “I hope Nintendo doesn’t sue us.”

This is the signature of a toy. Users didn’t have their own stories to tell, so they played with existing characters, someone else’s creative work, because the raw material of storytelling wasn’t there (hence the interest from Disney). However, when OpenAI tightened copyright controls in response to studio backlash, usage dropped further. Take away the most amusing trick, and people put the toy down.

We miss our mirror neurons

The entire value engine of social video, from TikTok to YouTube to Instagram Reels, runs on a premise so fundamental that it’s easy to overlook: someone was there. Someone held the camera. Someone chose what to film. Someone had a reaction, a point of view, a body in the scene. The creator is the content.

Every dominant social video format reinforces this. Reaction videos. Day-in-my-life vlogs. Unboxings. Behind-the-scenes footage. Travel content. Cooking demos. Livestreams. What they all share is a human who experienced something and chose to share it. Even in a MrBeast video, loaded with special effects and elaborate production, the draw is that he is experiencing the event.

But the connection runs deeper than mere presence. It’s because someone was there that we can connect. The creator becomes the “I” we could not be, the person who went to that place, tried that food, and had that reaction. There is a dimension to the content because it was lived by someone through whom we can relate. Neuroscience has a term for this mechanism: mirror neurons, cells that fire when we watch someone else perform an action, allowing us to feel, at a physiological level, what they feel. This is the engine of empathy, and it only works when there’s a real person on the other end.

Here’s the critical point: even if an AI-generated video looks exactly as though it happened, that’s insufficient. We disengage the moment we know it didn’t, or even when we merely suspect it didn’t. The connection requires belief in a lived experience. A perfect simulation of a sunset means nothing if nobody stood in front of it. And audiences can tell the difference, or, increasingly, they assume the worst and withhold their trust preemptively.

OpenAI understood this problem intuitively, even if they couldn’t solve it. Sora’s signature feature, the “cameo” system that let users scan their faces and insert themselves into generated videos, was an implicit admission that the tool needed a human presence to feel compelling. The generated video on its own wasn’t enough; it needed you in it. But grafting a face onto a synthetic scene isn’t the same as being there. It’s a deepfake of witnessing. The empathic bridge requires a real experience on the other end, and no amount of facial mapping can fabricate that. Audiences saw through it, which is one reason the feature failed to sustain engagement.

The message from audiences is consistent: we trust people we recognize. Provenance, at the consumer level, has nothing to do with metadata or file formats. It means knowing who made this. A known creator is a trust anchor: a human whose identity, history, and perspective guarantee authenticity. When audiences follow a creator, they are placing a bet on that person’s continued realness. AI-generated video can’t participate in that bet because there’s nobody on the other side of it.

The Camera Was Already the Right Tool

Consider what a smartphone camera actually is. It’s fast, always available, and it produces content that carries the implicit authority of having been there. When you film your child’s first steps, you’re creating a record that this moment happened, and you witnessed it. When a creator films a street food stall in Bangkok, they’re producing testimony, evidence of presence in a specific place at a specific time. A generative AI tool cannot fulfill that function, because the event it depicts didn’t happen. For most people, filming is a social act, an act of witnessing and remembering, and the camera already does everything they need.

For the small minority of creators, AI video generation poses a different problem: it removes the very thing that gives their work value. On TikTok, learning complex editing techniques, mastering transitions, and perfecting a lighting setup are acts of craft that audiences recognize and reward. Effort is currency in the creator economy. When a creator spends ten hours editing a three-minute vlog, that labor is visible, and the audience’s appreciation of it is part of the relationship. If the same video can be generated by a prompt in thirty seconds, the perceived value and the social proof of dedication evaporate. The creator’s competitive advantage is their skill, their perspective, and their willingness to do the work. AI generation strips all three.

The Professional Carve-Out

None of this means AI video generation has no future. It clearly does: as a professional production tool. Pre-visualization for filmmakers. Background generation for VFX. Hyper-personalized product ads at scale. B-roll replacement (where it threatens stock footage more than it threatens creators). These are legitimate, high-value applications.

But they’re instruments for skilled operators, the creative professionals I described in my original article. People who already know how to tell compelling stories will use these tools to tell them faster and more cheaply. That’s a productivity gain, comparable to what Photoshop did for image editing or what Pro Tools did for music production. What it isn’t is a consumer revolution.

OpenAI learned this the hard way. Sora was consuming extraordinary computing resources to deliver a consumer product with no clear path to profitability. The company chose to shut it down and redirect those resources toward enterprise productivity tools and robotics research. That decision tells you everything about where the actual demand lies.

Where This Leaves Us

The shutdown of Sora is a market verdict, and the verdict is clear. Consumers don’t need to generate video. They need to film things, share experiences, and connect with other humans who are doing the same. The platforms that facilitate this (TikTok with 1.9 billion users, YouTube with its creator ecosystem, and Instagram with its community tools) continue to grow. The platform that tried to replace the human witness with a text prompt lasted six months.

For the visual content industry, the implications run deeper than a single product failure. As AI floods feeds with synthetic content, what audiences are now calling “slop,” the value of verified human-origin content will only increase. Trust accrues to known creators, to recognizable human voices, to content that carries the trace of someone who was actually there. The brands, platforms, and creators who understand this will invest in that human signal. The ones who chase the efficiency promise of generated content will discover what OpenAI just discovered: you can produce spectacle, but you cannot produce testimony.

And testimony, the evidence that a human witnessed something, is what audiences actually want.

Author: Paul Melcher

Paul Melcher is a highly influential and visionary leader in visual tech, with 20+ years of experience in licensing, tech innovation, and entrepreneurship. He is the Managing Director of MelcherSystem and has held executive roles at Corbis, Gamma Press, Stipple, and more. Melcher received a Digital Media Licensing Association Award and has been named among the “100 most influential individuals in American photography.”